Abstract

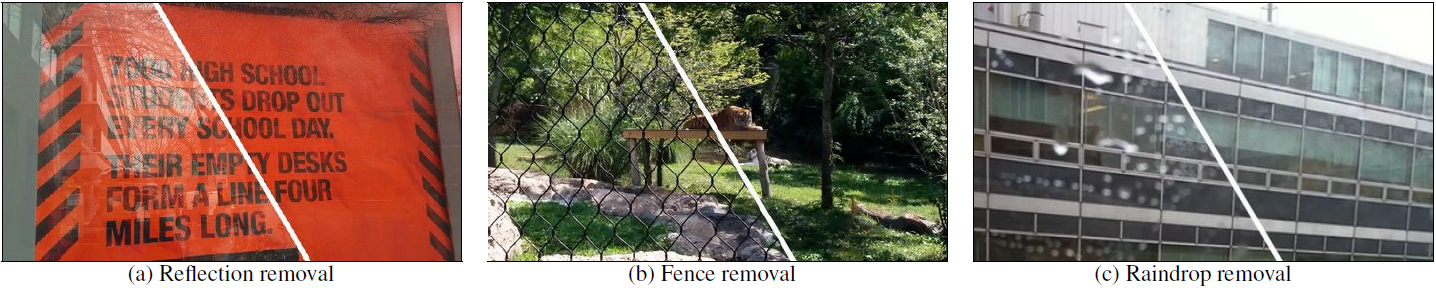

We present a learning-based approach for removing unwanted obstructions, such as window reflections, fence occlusions or raindrops, from a short sequence of images captured by a moving camera.

Our method leverages the motion differences between the background and the obstructing elements to recover both layers.

Specifically, we alternate between estimating dense optical flow fields of the two layers and reconstructing each layer from the flow-warped images via a deep convolutional neural network.

The learning-based layer reconstruction allows us to accommodate potential errors in the flow estimation and brittle assumptions such as brightness consistency.

We show that training on synthetically generated data transfers well to real images.

Our results on numerous challenging scenarios of reflection and fence removal demonstrate the effectiveness of the proposed method.
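To make the role of flow warping concrete, here is a minimal NumPy sketch of one reconstruction step. It is a toy under stated assumptions, not the method described above: the background flow is taken as known rather than estimated, a per-pixel temporal median stands in for the learned reconstruction network, and the names `warp_backward` and `reconstruct_background` are hypothetical. It only illustrates why aligning frames to the background motion leaves the obstruction inconsistent across frames, which is what lets the background be recovered.

```python
import numpy as np

def warp_backward(image, flow):
    """Backward-warp: output[y, x] = image[y + flow_y, x + flow_x] (nearest neighbour)."""
    h, w = image.shape
    ys, xs = np.mgrid[0:h, 0:w]
    src_x = np.clip(np.rint(xs + flow[..., 0]).astype(int), 0, w - 1)
    src_y = np.clip(np.rint(ys + flow[..., 1]).astype(int), 0, h - 1)
    return image[src_y, src_x]

def reconstruct_background(frames, flows_to_ref):
    """Align every frame to the reference view, then take a per-pixel median.

    Assumption: the median is a classical stand-in for the learned
    reconstruction network; it is not the authors' model.
    """
    aligned = [warp_backward(f, fl) for f, fl in zip(frames, flows_to_ref)]
    return np.median(np.stack(aligned), axis=0)

# Toy scene: the background translates horizontally with the camera,
# while a fence-like bar stays at a fixed image column.
rng = np.random.default_rng(0)
background = rng.uniform(0.0, 0.5, size=(8, 16))
shifts = [-1, 0, 1]  # background displacement per frame; 0 is the reference

frames, flows_to_ref = [], []
for t in shifts:
    frame = np.roll(background, t, axis=1)  # background moved by t pixels
    frame[:, 8] = 1.0                       # static obstruction
    frames.append(frame)

    flow = np.zeros((8, 16, 2))
    flow[..., 0] = t                        # known background flow (assumed given)
    flows_to_ref.append(flow)

recovered = reconstruct_background(frames, flows_to_ref)
max_error = float(np.abs(recovered - background).max())
```

After alignment the bar lands on a different column in each frame, so every pixel sees the true background in at least two of the three frames and the median discards the obstruction. Real sequences violate these assumptions (imperfect flow, brightness changes), which is the gap the learned reconstruction is meant to cover.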

Citation

Yu-Lun Liu, Wei-Sheng Lai, Ming-Hsuan Yang, Yung-Yu Chuang, and Jia-Bin Huang, "Learning to See Through Obstructions", in IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2020

Bibtex

@inproceedings{Liu-CVPR-2020,
  author    = {Liu, Yu-Lun and Lai, Wei-Sheng and Yang, Ming-Hsuan and Chuang, Yung-Yu and Huang, Jia-Bin},
  title     = {Learning to See Through Obstructions},
  booktitle = {IEEE Conference on Computer Vision and Pattern Recognition},
  year      = {2020}
}

Video

1-minute video

Two Minute Papers

References

- The visual centrifuge: Model-free layered video representations, CVPR, 2019.

- Accurate and efficient video de-fencing using convolutional neural networks and temporal information, ICME, 2018.

- A generic deep architecture for single image reflection removal and image smoothing, ICCV, 2017.

- Robust separation of reflection from multiple images, CVPR, 2014.

- Exploiting reflection change for automatic reflection removal, ICCV, 2013.

- Video reflection removal through spatio-temporal optimization, ICCV, 2017.

- A Computational Approach for Obstruction-Free Photography, SIGGRAPH, 2015.

- A deep learning approach for single image reflection removal, ECCV, 2018.

- Single image reflection separation with perceptual losses, CVPR, 2018.